
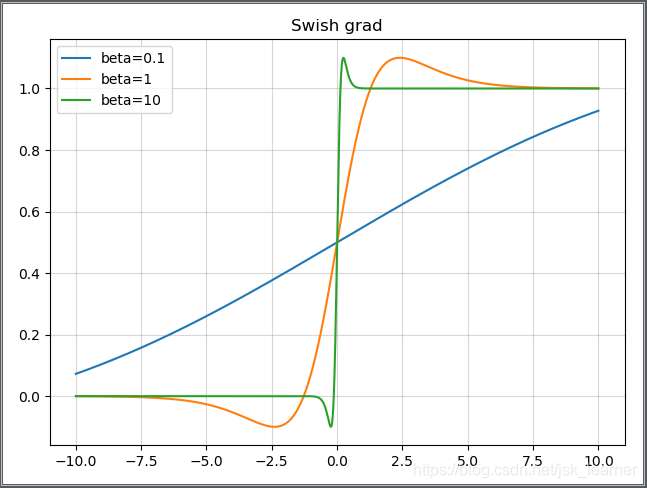

The author of MobileNetV3 used Hardswish & Hardsigmoid to replace the Sigmoid layer in ReLU6 & SE-block. It is much more expensive to calculate the Sigmoid function on a mobile device. Adding a hyperparameter to Swish makes the function expressionĬan be a constant or a trainable parameter.Īlthough this Swish nonlinearity improves accuracy, its cost is non-zero in an embedded environment. It is a reflection in the function by adding a few hyper-parameters and then showing more characteristics. , the general trend of the function is similar to ReLU but more complicated than ReLU. Because the saturation of the Sigmoid tends to cause the gradient to disappear, learn from the effect of ReLU, when The Swish was an activation function proposed by the Google team in recent years and was composed of the previous activation function. The SiLU activation function was also called the Swish activation function. Common activation functions include Sigmoid, Tanh, SiLU, Hardswish, Mish, MemoryEfficientMish, etc. To improve the computational performance of neural networks, people have studied the activation functions in neural networks. The function can realize the transmission of neuron information, so the activation function was an indispensable part of an artificial neural network. However, the neuron information of the previous layer cannot transfer to the neuron information of the next layer. Each layer without the activation function was equivalent to matrix multiplication. The activation function was an introduction to increase the nonlinearity of the neural network model. This function was the activation function. As shown in Figure 1, in the neuron, Input is also applied to a function after weighting and summing. The activation function obtains information from the front neuron and then transmits it to the next neuron. It helps the neural network to learn the complex patterns in the data, just like the neuron-based model in the human brain.

How to screen diverse cells quickly, efficiently, and accurately is one of the research fields of biomedicine.Īctivation functions were a critical part of the design of a neural network. The previous means have an application value for screening and identifying different cells types, but it was not perfect. Using artificial intelligence recognition technology is one of the methods to screening out different types of cells in biological blood for example, adopting machine learning and deep learning methods to screen diverse cells types.

The application of artificial intelligence technology in the biomedical field provided a critical research theory for the development of biomedical. It was one of the tasks of biomedical research to find out the cells in the blood, for instance, using some methods rapidly and accurately screening of red blood cells, white blood cells, platelets, etc. The blood of an organism contains many components.
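To make the definitions concrete, here is a minimal sketch of the activation functions discussed above, written in plain Python on scalar inputs so it needs no deep-learning framework. The function names and the default `beta = 1.0` are illustrative choices, not taken from the original; Mish is implemented from its standard definition, x·tanh(softplus(x)), which the text names but does not spell out. MemoryEfficientMish is, to our understanding, a memory-saving implementation of the same Mish formula rather than a different function, so it is not shown separately.

```python
import math


def sigmoid(x: float) -> float:
    """Logistic sigmoid 1 / (1 + e^-x), branched to avoid overflow for large |x|."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def swish(x: float, beta: float = 1.0) -> float:
    """Swish with hyperparameter beta: x * sigmoid(beta * x). beta = 1 gives SiLU."""
    return x * sigmoid(beta * x)


def relu6(x: float) -> float:
    """ReLU clipped to the range [0, 6]."""
    return min(max(x, 0.0), 6.0)


def hard_sigmoid(x: float) -> float:
    """Piecewise-linear stand-in for sigmoid: ReLU6(x + 3) / 6."""
    return relu6(x + 3.0) / 6.0


def hard_swish(x: float) -> float:
    """Piecewise-linear stand-in for Swish: x * ReLU6(x + 3) / 6 (no exponential)."""
    return x * hard_sigmoid(x)


def softplus(x: float) -> float:
    """log(1 + e^x), rewritten as max(x, 0) + log1p(e^-|x|) for numerical stability."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def mish(x: float) -> float:
    """Mish: x * tanh(softplus(x))."""
    return x * math.tanh(softplus(x))


if __name__ == "__main__":
    # Compare the smooth functions with the cheaper piecewise-linear version.
    for x in (-4.0, -1.0, 0.0, 1.0, 4.0):
        print(f"x={x:+.1f}  silu={swish(x):+.4f}  "
              f"hard_swish={hard_swish(x):+.4f}  mish={mish(x):+.4f}")
```

Setting `beta = 0` turns Swish into the linear function x/2, while a large `beta` pushes it toward ReLU. `hard_swish` uses only additions, clipping, and one multiplication, which is why it is attractive on mobile hardware.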
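The statement that a layer without an activation function is equivalent to a matrix multiplication can be checked with a small numerical sketch: two stacked linear layers give exactly the same output as one layer whose weight matrix is their product. The weight matrices and the input below are arbitrary illustrative values, biases are omitted for brevity (with biases the composition is still a single affine map), and ReLU is used only as an example nonlinearity.

```python
def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def matvec(m, v):
    """Multiply a matrix (list of rows) by a vector (list)."""
    return [sum(m_ij * v_j for m_ij, v_j in zip(row, v)) for row in m]


def relu(v):
    """Element-wise ReLU, used here as an example activation function."""
    return [max(0.0, t) for t in v]


# Hypothetical weights: W1 maps R^2 -> R^3, W2 maps R^3 -> R^2.
W1 = [[1.0, 2.0], [3.0, 4.0], [0.5, -1.0]]
W2 = [[2.0, 0.0, 1.0], [-1.0, 1.0, 3.0]]
x = [1.0, -2.0]

# Without an activation, two layers equal one layer with weights W2 @ W1.
two_layers = matvec(W2, matvec(W1, x))
one_layer = matvec(matmul(W2, W1), x)
print(two_layers, one_layer)  # [-3.5, 5.5] [-3.5, 5.5]

# With ReLU between the layers, the single-matrix shortcut no longer matches.
with_relu = matvec(W2, relu(matvec(W1, x)))
print(with_relu)  # [2.5, 7.5]
```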
